show () correlation_heatmap ( X_train ])īased on above results, I would say that it is safe to remove: ZN, CHAS, AGE, INDUS.

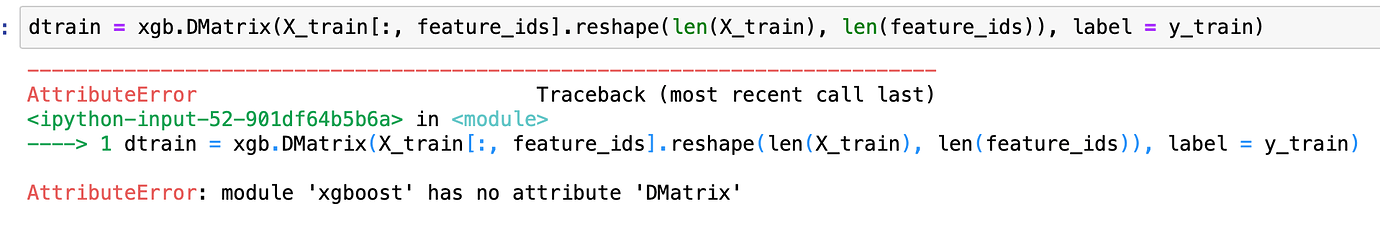

heatmap ( correlations, vmax = 1.0, center = 0, fmt = '.2f', cmap = "YlGnBu", square = True, linewidths =. The permutation importance for Xgboost model can be easily computed:ĭef correlation_heatmap ( train ): correlations = train. The features which impact the performance the most are the most important one. This permutation method will randomly shuffle each feature and compute the change in the model’s performance. It is available in scikit-learn from version 0.22. Yes, you can use permutation_importance from scikit-learn on Xgboost! ( scikit-learn is amazing!) It is possible because Xgboost implements the scikit-learn interface API. Permutation Based Feature Importance (with scikit-learn) There are also cover, total_gain, total_cover types of importance.This type of feature importance can favourize numerical and high cardinality features. The weight shows the number of times the feature is used to split data.The gain type shows the average gain across all splits where feature was used.You can check the type of the importance with xgb.importance_type. When you access Booster object and get the importance with get_score method, then default is weight. The default type is gain if you construct model with scikit-learn like API ( docs). There are several types of importance in the Xgboost - it can be computed in several different ways.xlabel ( "Xgboost Feature Importance" )Ībout Xgboost Built-in Feature Importance
